— DeepAI colorization

— Me@2020-11-25 04:39:20 PM

.

.

2020.11.25 Wednesday (c) All rights reserved by ACHK

A First Course in String Theory

.

2.3 Lorentz transformations, derivatives, and quantum operators.

(b) Show that the objects $\frac{\partial}{\partial x^\mu}$ transform under Lorentz transformations in the same way as the $a_\mu$ considered in (a) do. Thus, partial derivatives with respect to conventional upper-index coordinates $x^\mu$ behave as a four-vector with lower indices – as reflected by writing it as $\partial_\mu$.

~~~

…

Denoting as

is misleading, because that presupposes that

is directly related to the matrix

.

To avoid this bug, instead, we denote as

. So

…

Using the Kronecker Delta and Einstein summation notation, we have

So

In other words,

— Me@2020-11-23 04:27:13 PM

.

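One defines (as a matter of notation),

$${\Lambda_\nu}^\mu \equiv {\left(\Lambda^{-1}\right)^\mu}_\nu,$$

and may in this notation write

$${A'}_\nu = {\Lambda_\nu}^\mu A_\mu.$$

Now for a subtlety. The implied summation on the right hand side of

$${A'}_\nu = {\Lambda_\nu}^\mu A_\mu = {\left(\Lambda^{-1}\right)^\mu}_\nu A_\mu$$

is running over a row index of the matrix representing $\Lambda^{-1}$. Thus, in terms of matrices, this transformation should be thought of as the inverse transpose of $\Lambda$ acting on the column vector $A_\mu$. That is, in pure matrix notation,

$$A' = \left(\Lambda^{-1}\right)^{\mathrm T} A.$$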

— Wikipedia on Lorentz transformation
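The inverse-transpose rule quoted above can be checked numerically. The following is only a minimal sketch in plain Python: the metric signature (-,+,+,+), the sample four-vector, the boost velocity and rotation angle, and all helper names (`boost_x`, `rotation_z`, `lower`, and so on) are assumptions chosen for illustration, not anything taken from the textbook or from Wikipedia. A rotation is composed with the boost so that the matrix is not symmetric and the transpose actually matters.

```python
import math

def boost_x(beta):
    """Boost along x with velocity beta = v/c, acting on (ct, x, y, z)."""
    gamma = 1.0 / math.sqrt(1.0 - beta * beta)
    return [
        [gamma, -gamma * beta, 0.0, 0.0],
        [-gamma * beta, gamma, 0.0, 0.0],
        [0.0, 0.0, 1.0, 0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]

def rotation_z(theta):
    """Spatial rotation about the z axis by angle theta."""
    c, s = math.cos(theta), math.sin(theta)
    return [
        [1.0, 0.0, 0.0, 0.0],
        [0.0, c, -s, 0.0],
        [0.0, s, c, 0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]

def mat_mul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transpose(m):
    return [list(col) for col in zip(*m)]

def mat_vec(m, v):
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

ETA_DIAG = [-1.0, 1.0, 1.0, 1.0]  # assumed metric signature (-,+,+,+)

def lower(v):
    """Lower an index with the diagonal Minkowski metric."""
    return [e * x for e, x in zip(ETA_DIAG, v)]

beta, theta = 0.6, 0.3  # arbitrary sample values
lam = mat_mul(rotation_z(theta), boost_x(beta))
# The inverse undoes the two steps in reverse order.
lam_inv = mat_mul(boost_x(-beta), rotation_z(-theta))

a_upper = [2.0, 1.0, -3.0, 0.5]  # arbitrary sample four-vector
a_lower = lower(a_upper)

a_upper_new = mat_vec(lam, a_upper)                 # upper-index rule
a_lower_new = mat_vec(transpose(lam_inv), a_lower)  # inverse-transpose rule
```

Lowering the index of the transformed upper-index vector should give the same components as `a_lower_new`, and the contraction of the lower-index and upper-index components should be the same before and after the transformation.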

.

So

In other words,

.

Denote as

In other words,

.

The Lorentz transformation:

.

.

.

.

Now we consider as a function of

‘s:

Since ‘s and

‘s are related by Lorentz transform,

is also a function of

‘s, although indirectly.

For notational simplicity, we write as

Since is a function of

‘s, we can differentiate it with respect to

‘s.

Since

,

Therefore,

It is the same as the Lorentz transform for covariant vectors:
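The covariant rule referred to here, and the chain-rule step that leads to it, can be sketched as follows. The symbols are assumptions made for this sketch: $f$ stands for an arbitrary differentiable function of the coordinates, and the inverse matrix is written out explicitly as $\Lambda^{-1}$ so that no shorthand for it is needed.

```latex
% Assumed symbols: f is an arbitrary differentiable function;
% \Lambda is the Lorentz transformation matrix; a_\mu is a lower-index vector.
\begin{aligned}
x'^{\mu} &= \Lambda^{\mu}{}_{\nu}\, x^{\nu}
\quad\Longrightarrow\quad
x^{\nu} = (\Lambda^{-1})^{\nu}{}_{\mu}\, x'^{\mu}, \\
\frac{\partial x^{\nu}}{\partial x'^{\mu}} &= (\Lambda^{-1})^{\nu}{}_{\mu}, \\
\frac{\partial f}{\partial x'^{\mu}}
&= \frac{\partial x^{\nu}}{\partial x'^{\mu}}\,
   \frac{\partial f}{\partial x^{\nu}}
 = (\Lambda^{-1})^{\nu}{}_{\mu}\,
   \frac{\partial f}{\partial x^{\nu}}, \\
a'_{\mu} &= (\Lambda^{-1})^{\nu}{}_{\mu}\, a_{\nu}.
\end{aligned}
```

The third and fourth lines have the same form, which is the statement that $\partial/\partial x^\mu$ transforms like a lower-index four-vector.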

— Me@2020-11-23 04:27:13 PM

.

.

2020.11.24 Tuesday (c) All rights reserved by ACHK

In physics, a global symmetry is a symmetry that holds at all points in the spacetime under consideration, as opposed to a local symmetry which varies from point to point.

Global symmetries require conservation laws, but not forces, in physics.

— Wikipedia on Global symmetry

.

.

2020.11.22 Sunday ACHK

Nothing Extra 7 (無額外論 7)

.

The one in the mirror is your Light.

— Me@2011.06.24

.

Thou shalt have no other gods before Me.

— one of the Ten Commandments

.

God teaches you through your mind and helps you through your actions.

— Me@the Last Century

.

.

2020.11.21 Saturday (c) All rights reserved by ACHK

Opportunity Creation Theory 1.6.6 (機遇創生論 1.6.6) | Ten Years 3.4 (十年 3.4)

.

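General skill, taken to its extreme, becomes a specialty. There are many ways to develop general skill to an extreme, and each extreme forms a specialty of its own. The general is the trunk; the specialties are the branches. The strength of one branch does not guarantee the health of another.

Does that mean that, once your student years are over, you no longer need to develop general knowledge or general skills?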

.

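After your student years, during your working years, you still need to develop general knowledge and general skills, in order to maintain your mental and physical abilities and keep them from deteriorating. For example, I believe the puzzle games just mentioned can help prevent mental decline in elderly people.

I think so because I have seen some interviews with elderly people. One ninety-year-old lady said that she keeps her mind clear and agile by playing a handheld game console.

Even if you are not yet old but are only entering middle age, you should pay closer attention to any decline in mental or physical ability. Playing suitable types of computer games can keep your reactions sharp. Which types?

There are many types of computer games; the three main ones are "construction", "puzzle-solving" and "combat mission". "Combat mission" games can be used to train your reactions. Those games put you in dangerous and urgent situations. You need quick and precise skills at that moment to complete the mission and get out of danger. In other words, those games also train you to control your own mind and master your own fear.

In the same way, although ordinary exercise such as push-ups has no so-called "use" in itself, it is precisely these "useless" exercises that you need in order to maintain your physical fitness.

Whether for physical or mental ability, without a strong and stable trunk there can be no healthy branches or lush leaves.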

— Me@2020-11-16 04:55:18 PM

.

.

2020.11.20 Friday (c) All rights reserved by ACHK

Structure and Interpretation of Classical Mechanics

.

Ex 1.10 Higher-derivative Lagrangians

Derive Lagrange’s equations for Lagrangians that depend on accelerations. In particular, show that the Lagrange equations for Lagrangians of the form $L(t, q, \dot q, \ddot q)$ with $\ddot q$ terms are

$$D^2(\partial_3 L \circ \Gamma[q]) - D(\partial_2 L \circ \Gamma[q]) + \partial_1 L \circ \Gamma[q] = 0.$$

In general, these equations, first derived by Poisson, will involve the fourth derivative of $q$. Note that the derivation is completely analogous to the derivation of the Lagrange equations without accelerations; it is just longer. What restrictions must we place on the variations so that the critical path satisfies a differential equation?

Varying the action

.

Let .

.

Chain rule of functional variation

Since variation commutes with integration,

By the chain rule of functional variation:

If is path-independent,

But is path-independent?

The is path-dependent. Its input is a path

, not just

, the value of

at the time

. However,

itself is a path-independent function, because its input is not a path

, but a quadruple of values

.

Since is path-independent,

Since and

,

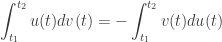

Here is a trick for integration by parts:

As long as the boundary term

,

So if and

,

Since and

,

By the principle of stationary action, . So

Since this is true for any function that satisfies

and

,

.
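For reference, the steps of this derivation can be collected into one chain of equalities. This is only a sketch in SICM-style notation; writing the variation as $\eta$, the end times as $t_1$ and $t_2$, and the local tuple as $\Gamma[q] = (t, q, Dq, D^2 q)$ are assumptions made here for illustration.

```latex
% Assumed symbols: \eta is the variation of the path q;
% \Gamma[q] = (t, q, Dq, D^2 q); t_1 and t_2 are the end times.
\begin{aligned}
S[q](t_1, t_2) &= \int_{t_1}^{t_2} L \circ \Gamma[q], \\
\delta_\eta S[q](t_1, t_2)
&= \int_{t_1}^{t_2} \Big[ (\partial_1 L \circ \Gamma[q])\,\eta
   + (\partial_2 L \circ \Gamma[q])\,D\eta
   + (\partial_3 L \circ \Gamma[q])\,D^2\eta \Big], \\
\int_{t_1}^{t_2} (\partial_2 L \circ \Gamma[q])\,D\eta
&= \Big[ (\partial_2 L \circ \Gamma[q])\,\eta \Big]_{t_1}^{t_2}
   - \int_{t_1}^{t_2} D(\partial_2 L \circ \Gamma[q])\,\eta, \\
\int_{t_1}^{t_2} (\partial_3 L \circ \Gamma[q])\,D^2\eta
&= \Big[ (\partial_3 L \circ \Gamma[q])\,D\eta
   - D(\partial_3 L \circ \Gamma[q])\,\eta \Big]_{t_1}^{t_2}
   + \int_{t_1}^{t_2} D^2(\partial_3 L \circ \Gamma[q])\,\eta, \\
\delta_\eta S[q](t_1, t_2)
&= \int_{t_1}^{t_2} \Big[ \partial_1 L \circ \Gamma[q]
   - D(\partial_2 L \circ \Gamma[q])
   + D^2(\partial_3 L \circ \Gamma[q]) \Big]\,\eta
\quad \text{if } \eta(t_i) = D\eta(t_i) = 0,\ i = 1, 2.
\end{aligned}
```

Under these assumptions, every boundary term drops out only when both $\eta$ and $D\eta$ vanish at the two end times. That is the restriction on the variations the exercise asks about.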

Note:

The notation of the path function is

, not

.

The notation means that

takes a path

as input. And then returns a path-independent function

, which takes time

as input, returns a value

.

The other notation makes no sense, because

takes a path

, not a value

, as input.

— Me@2020-11-11 05:37:13 PM

.

.

2020.11.11 Wednesday (c) All rights reserved by ACHK

Logical arrow of time, 4.2

.

Memory is of the past.

The main point of memories or records is that without them, most of the past microstate information would be lost for a macroscopic observer forever.

For example, if a mixture has already reached an equilibrium state, we cannot deduce which earlier microstate it came from, unless we have a memory (record) of it.


.

memory/record

~ some of the past microstate and macrostate information encoded in present macroscopic devices, such as paper, electronic devices, etc.

.

How come macroscopic time is cumulative?

.

Quantum time evolution is unitary.

A quantum state in the present has evolved from one and only one quantum state at any particular time point in the past.

Also, that quantum state in the present will evolve to one and only one quantum state at any particular time point in the future.

.

Let

= a past time point

= now

= a future time point

Also, let state at time

evolve to state

at time

. And then state

evolves to state

at time

.

.

State has one-one correspondence to its past state

. So for the state

, it does not need memory to store any information of state

.

Instead, just by knowing that microstate is

, we already can deduce that it is evolved from state

at time

.

In other words, microstate does not require memory.
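The one-to-one correspondence used in this argument can be written compactly. This is a sketch under assumed notation: the time labels $t_1 < t_2 < t_3$, the state labels $|\psi_i\rangle$ and the evolution operator $U$ are symbols introduced here for illustration.

```latex
% Assumed symbols: t_1 (past) < t_2 (now) < t_3 (future);
% |\psi_i> is the state at time t_i; U is the time-evolution operator.
\begin{aligned}
|\psi_2\rangle &= U(t_2, t_1)\,|\psi_1\rangle, &
|\psi_3\rangle &= U(t_3, t_2)\,|\psi_2\rangle, \\
U^\dagger U &= U U^\dagger = I
&\Longrightarrow\quad
|\psi_1\rangle &= U^\dagger(t_2, t_1)\,|\psi_2\rangle .
\end{aligned}
```

Because $U$ is invertible, the present microstate alone fixes the past microstate; no separate record is needed.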

— Me@2020-10-28 10:26 AM

.

.

2020.11.02 Monday (c) All rights reserved by ACHK
