The initial microstates should be averaged over, because they form an ensemble for the initial macrostate.

Note that a macrostate is actually one particular microstate, not a collection of microstates; it is just that we don’t know which particular microstate.

But why should the possible final states be summed over, rather than averaged?

— Me@2013-08-13 05:16 PM

.

a macrostate = (a microstate in) a set of macroscopically-indistinguishable microstates

— Me@2022-01-09 07:43 AM

Note that, by definition, two macroscopically-indistinguishable microstates will never separate into two distinct macrostates.

— Me@2022-04-14 05:55 PM

.

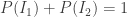

The initial macrostate has probability one, because it is already known. So the probabilities of all the possible, mutually exclusive initial microstates corresponding to that initial macrostate sum to one; for example:

.

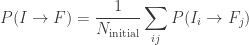

By definition, the final macrostate is not known yet, so no possible final macrostate is assigned probability one.

The probability of getting a particular final macrostate from that initial macrostate is the sum of the probabilities of all the possible, mutually exclusive final microstates corresponding to that final macrostate.
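
In symbols, the two rules can be sketched as follows. The notation is an assumption made here for illustration and is not taken from the images: I and F are the initial and final macrostates, i and f are microstates belonging to them, and N_I is the number of microstates in I. The second line further assumes that the initial microstates are equally likely.

```latex
\begin{align}
P(F \mid I) &= \sum_{f \in F} \sum_{i \in I} P(i \mid I)\, P(f \mid i),
  \qquad \sum_{i \in I} P(i \mid I) = 1, \\
P(F \mid I) &= \frac{1}{N_I} \sum_{i \in I} \sum_{f \in F} P(f \mid i)
  \qquad \text{if } P(i \mid I) = \frac{1}{N_I}.
\end{align}
```

The weights P(i | I) turn the sum over initial microstates into an average, while the final microstates carry no such weights and are simply added.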

— Me@2022-04-13 01:09 PM
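
A small numerical sketch may make the asymmetry concrete. Everything in it is hypothetical: a toy system with four microstates, a made-up transition table `T`, two macrostates `"A"` and `"B"`, and the assumption that the microstates of the known initial macrostate are equally likely.

```python
from fractions import Fraction

# Hypothetical toy system with 4 microstates labelled 0..3.
# T[i][f] = probability that microstate i evolves into microstate f.
# Each row sums to 1. The numbers are made up for illustration.
T = [
    [Fraction(1, 2), Fraction(1, 2), Fraction(0), Fraction(0)],
    [Fraction(0), Fraction(1, 4), Fraction(3, 4), Fraction(0)],
    [Fraction(0), Fraction(0), Fraction(1, 2), Fraction(1, 2)],
    [Fraction(1, 4), Fraction(0), Fraction(0), Fraction(3, 4)],
]

# Each macrostate is a set of macroscopically indistinguishable microstates.
macrostates = {"A": [0, 1], "B": [2, 3]}


def macro_transition(initial, final):
    """Probability of reaching macrostate `final` from macrostate `initial`."""
    init = macrostates[initial]
    # Average over initial microstates: their weights sum to one
    # (equal weights are an assumption of this sketch).
    weight = Fraction(1, len(init))
    # Sum over final microstates: mutually exclusive outcomes just add.
    return sum(weight * T[i][f] for i in init for f in macrostates[final])


p_AA = macro_transition("A", "A")
p_AB = macro_transition("A", "B")
print(p_AA, p_AB, p_AA + p_AB)
```

Because the initial weights sum to one and each row of `T` sums to one, the probabilities of all possible final macrostates add up to one as well.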

.

The only assumptions I made are those about the addition of probabilities of assumptions and their effects – and these logical rules are fundamentally asymmetric when it comes to the role of the assumptions and their consequences. This logical arrow of time can’t be removed from any reasoning about a world that depends on time – time only copies the logical relationship of implication. And this logical arrow of time is the source of the thermodynamic arrow of time as well.

— edited Feb 2, 2011 at 15:23

— answered Jan 14, 2011 at 11:42

— Luboš Motl

— Calculation of the cross section

— Physics StackExchange

.

.

2022.04.14 Thursday (c) All rights reserved by ACHK
